It's not an academic concept. It's something that happens in real time: in Slack channels, in QBR decks, in RevOps budget reviews. It's the act of trusting what AI delivers without any meaningful validation of whether it maps to reality.

You run a prompt, you get output, and because it looks plausible and arrived in milliseconds, you treat it as ground truth.

Mysticism in the traditional sense means belief without empirical evidence — faith as a knowledge system. AI mysticism is the same thing, just wearing a dashboard. You're not praying. You're just running a query and choosing not to interrogate the answer.

"The question isn't whether AI-built tools can work. They can. The question is whether you have a process to validate them — or whether you're just believing."

The shift that's actually happening

Right now, across go-to-market teams, there is a very real migration underway. Teams are evaluating whether to keep paying for established data and intelligence platforms — the Similarwebs, the Bomboras, the ZoomInfos, the specialized technographic and intent layers — or to replace them with internal tools built on Claude or ChatGPT by a go-to-market engineer or ops team.

The economics seem obvious. A technographic data subscription might cost $2,000–$5,000/month. A Claude API subscription plus a few hours of engineering time gets you something that does a version of the same thing for $30. That's a compelling spreadsheet number.

But here's what the spreadsheet doesn't capture: the established tool has been validated. Methodologically, at scale, over time. The homegrown AI tool has been vibed into existence by someone who's good at prompting.

A concrete example: NAICS and SIC code lookups

We recently built and launched a free NAICS + SIC code lookup tool at LeadGenius. It's fast, it's useful, and it costs nothing to use.

NAICS + SIC Code Researcher

Free AI-powered industry code lookup — search by company, description, or keyword

leadgenius.com/apps/naics-sic-code-researcher

But I'm also the first person to acknowledge the honest tradeoff. If you were previously using a validated data provider to append NAICS and SIC codes to your accounts at scale, with a methodology stress-tested against the Census Bureau, against real company data, and against edge cases, you had something our free tool does not yet have: a proven accuracy track record.

Our tool is useful. It's directionally accurate for most common use cases. But "directionally accurate for most common use cases" is very different from "94% accurate across 2.3 million records with documented methodology." The latter is what you were paying for.

AI mysticism kicks in when a team uses a tool like ours — or builds their own — and simply assumes the output is right. No spot-check. No control set. No comparison against a known-accurate dataset. Just: prompt in, code out, data appended, campaign launched.

What you're actually trading

Let's be precise about what's on each side of this trade.

| Proven SaaS Tool | Homegrown AI Tool |
|---|---|
| Established methodology | Fast to build, cheap to run |
| Documented accuracy rates | Accuracy unknown or assumed |
| Scale-tested against real data | Tested on a handful of examples |
| Vendor accountable for errors | Internal team owns all risk |
| Years of refinement | Prompt engineering = methodology |
| Audit trail for compliance | No external validation |

Neither column wins by default. The problem is when teams look only at cost and declare victory without examining what moved off the ledger.

The four forms of validation debt

When you replace a validated tool with an unvalidated one, the cost doesn't disappear; it gets deferred. Here's where it typically surfaces:

Targeting Debt

Your ICP segmentation is built on industry codes, firmographics, and technographic signals that were never verified. You're running paid media against accounts that don't actually match your ICP — you just think they do because an AI told you so.

Pipeline Quality Debt

Bad signal in, bad pipeline out. If your intent data or technographic enrichment is inaccurate, you're burning rep time on accounts that were never good fits. This shows up as declining connect rates, low conversion from MQL to SQL, and reps who lose faith in the data.

Compliance Debt

Validated data providers typically carry certifications, privacy agreements, and audit trails. Your internal AI tool doesn't. If you're in a regulated industry or selling to enterprise buyers with procurement scrutiny, this becomes a real risk surface — especially around data provenance.

Trust Debt

This one is subtle but lethal. Once your sales team or leadership loses confidence in your data quality, you lose the organizational willingness to invest in data-driven programs at all. "The data is garbage" becomes a refrain that haunts campaigns for years.

This isn't an argument against AI tools

Let me be clear: I am not arguing that AI-built tools are inferior or that the established vendors are untouchable. That's not the point.

The point is that the shift from proven solutions to homegrown AI tools is a real trade, and it deserves real scrutiny. The cost savings are genuine. The validation gap is also genuine. Both things are true simultaneously, and treating only one of them as real is AI mysticism in action.

The teams who will do this well are the ones who build validation into the adoption process. They don't just ask "can we build it?" They ask "how will we know if it's working?" They run their AI outputs against a control sample of known-accurate data. They spot-check at a rate proportional to how much they're relying on the output. They assign someone to own data quality — not just data volume.
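One way to make "spot-check at a rate proportional to how much they're relying on the output" concrete is to pull a reproducible random sample of enriched records for a human to review. The Python sketch below is only an illustration: the function name `spot_check_sample`, the use-case labels, and the review rates are hypothetical placeholders, not recommended values.

```python
import math
import random
from typing import Dict, List, Sequence

# Hypothetical review rates: the more a program relies on the output,
# the larger the share of records a human looks at. Placeholder numbers.
REVIEW_RATES: Dict[str, float] = {
    "one_off_research": 0.01,
    "sales_prioritization": 0.05,
    "paid_media_targeting": 0.10,
}


def spot_check_sample(records: Sequence[dict], use_case: str, seed: int = 0) -> List[dict]:
    """Return a random subset of `records` for manual review."""
    rate = REVIEW_RATES[use_case]
    if not records:
        return []
    # Always review at least one record, never more than the whole batch.
    k = min(len(records), max(1, math.ceil(len(records) * rate)))
    # A seeded generator keeps the sample reproducible if someone audits it later.
    return random.Random(seed).sample(list(records), k)


# Toy batch of enriched accounts (made-up data, not real lookups).
batch = [{"account_id": i, "naics": "541511"} for i in range(200)]
to_review = spot_check_sample(batch, "paid_media_targeting")
print(len(to_review))  # 20 of the 200 records go to a reviewer
```

The sampling is deliberately random rather than "the first N rows", because the first rows of an export are rarely representative of the whole list.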

The $30 tool can absolutely outperform the $3,000 tool. But that outcome has to be proven, not assumed. The moment you assume it, you've left the domain of data and entered the domain of mysticism.

What good validation looks like in practice

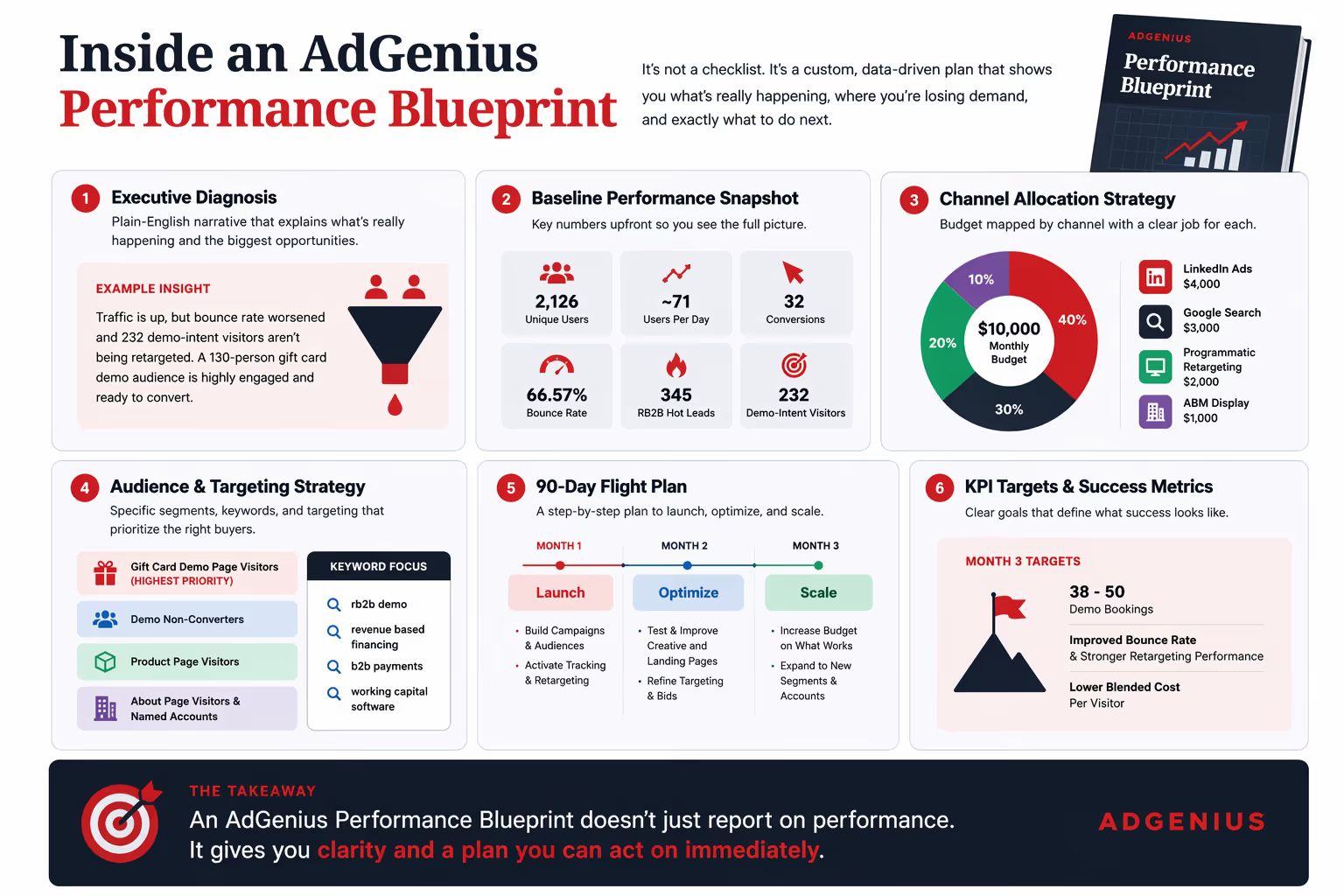

If you're building or adopting AI-native tools to replace validated data sources, here's the minimum viable validation framework:

- Build a control set. Pull 200–500 records where you already know the correct answer — industry codes, tech stack, intent signals, whatever you're enriching. Run your AI tool against them. Measure accuracy. If you can't do this, you're flying blind.

- Set an accuracy threshold before you deploy. Don't decide post-hoc whether the accuracy is "good enough." Decide in advance what acceptable looks like, then measure against it. For most commercial use cases, anything below 85% accuracy on a categorical enrichment is a problem worth knowing about before you build campaigns on top of it.

- Build ongoing monitoring. Models drift. Prompts break. Data sources shift. A validation that was true in January may not be true in Q3. If your AI enrichment tool has no monitoring layer, you will eventually be running campaigns on stale, degraded signal without knowing it.

- Keep the receipt. Don't fully cancel the tool you're replacing until your AI alternative has cleared a meaningful validation window — at minimum 60–90 days with real campaign data. The savings are real, but so is the reversal cost if you discover the accuracy problem after you've already burned pipeline.
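The first two steps above can be sketched in a few lines of Python. This is a minimal illustration under stated assumptions, not how our tool or any vendor measures accuracy: `evaluate_against_control`, `fake_ai_lookup`, and the toy control set are hypothetical names and data, and the Wilson score interval is my own addition to show how much uncertainty a small control set leaves. The 0.85 threshold is the figure from the list above, fixed before the run.

```python
import math
from typing import Callable, Dict, Tuple

ACCURACY_THRESHOLD = 0.85  # decided in advance, per the list above


def wilson_interval(correct: int, total: int, z: float = 1.96) -> Tuple[float, float]:
    """Wilson score interval for a proportion (z=1.96 is roughly 95%)."""
    if total <= 0:
        raise ValueError("control set is empty")
    p = correct / total
    denom = 1 + z * z / total
    center = (p + z * z / (2 * total)) / denom
    half = z * math.sqrt(p * (1 - p) / total + z * z / (4 * total * total)) / denom
    return center - half, center + half


def evaluate_against_control(
    control: Dict[str, str],
    predict: Callable[[str], str],
    threshold: float,
) -> dict:
    """Run `predict` on every control record and compare to the known answer."""
    mismatches = []
    for company, expected in control.items():
        got = predict(company)
        if got != expected:
            mismatches.append((company, expected, got))
    total = len(control)
    correct = total - len(mismatches)
    low, high = wilson_interval(correct, total)
    return {
        "accuracy": correct / total,
        "interval": (low, high),
        "meets_threshold": correct / total >= threshold,
        "mismatches": mismatches,  # keep these; they show where the tool fails
    }


# Toy control set (illustrative labels only; a real one needs 200-500 records).
control = {
    "Example Software Co": "541511",
    "Example Diner": "722511",
    "Example Bakery": "311811",
    "Example Trucking": "484121",
}


def fake_ai_lookup(company: str) -> str:
    """Stand-in for the AI tool under test; gets one record wrong on purpose."""
    answers = dict(control, **{"Example Bakery": "722511"})
    return answers[company]


report = evaluate_against_control(control, fake_ai_lookup, ACCURACY_THRESHOLD)
print(report["accuracy"], report["meets_threshold"])  # 0.75 False
```

With only four records the interval is far too wide to conclude anything, which is the argument for a control set in the hundreds. Re-running the same evaluation on a schedule and comparing the results over time is one simple way to get the monitoring signal described in the third step.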

The honest version of the pitch

When I talk about our NAICS + SIC code lookup tool, the honest pitch is this: it's genuinely useful for quick research, one-off lookups, small batches, and getting directional signal fast. It's free. It works. Use it.

But if you are running large-scale programmatic enrichment across tens of thousands of accounts that will feed your ICP targeting, your TAM modeling, and your paid media segmentation, you need to validate whatever tool you're using, including ours, before you trust it at that scale. That's not a caveat. That's just what responsible data operations look like.

The uncomfortable reality of AI mysticism is that it feels like rigor. You ran a process. You got output. The output looks structured and confident. But confidence is not accuracy, and process is not validation. The gap between those two things — that's where the cost lives.

The best AI-native GTM teams will be the ones who treat "built with AI" as the starting point of a validation conversation, not the end of one. The ones who still ask: how do we know?