Why I put this report together

This wasn’t supposed to become a report about MQLs and MQAs.

I’m a CRO who ended up acting a bit like an internal startup founder, building a paid media optimization product and spending a lot of time talking with demand gen leaders about fit: who this platform is actually for, what problems matter most to them, and how they measure success.

Over and over, one theme kept resurfacing. In conversation after conversation, enterprise marketers would tell me some version of: “We’re trying to get away from MQLs and move toward MQAs.” The framing was usually pretty binary. MQLs were treated like outdated baggage. MQAs were presented as the modern answer.

But the more I talked to teams that were supposedly further along in that transition, the less convinced I became that they had actually solved anything. They often had better-looking dashboards, more expensive software, and a lot more terminology. What they didn’t always have was clarity. When I pushed on a simple question (which programs are actually generating pipeline?), the answers were often fuzzy.

That disconnect is what pushed me into the research.

Over the last week, I dug through analyst research, practitioner blogs, Reddit discussions, LinkedIn arguments, vendor content, survey data, and podcast transcripts to understand what is really true here, not just what the market keeps repeating.

The conclusion was not what the loudest voices in B2B marketing would have you believe.

MQLs are not some extinct relic. MQAs are not a magic upgrade. The reality is messier, more situational, and far more shaped by vendor incentives than most people realize. The right framework depends on your deal economics, the number of stakeholders involved, the length of the buying cycle, and the way your GTM engine actually works.

That is what this report is about. I’m publishing it because I think a lot of revenue leaders are trying to sort through the exact same noise.

A quick note on neutrality: I do not sell an ABM platform. I do not sell MAP software. I do not have a financial stake in whether a team uses MQLs, MQAs, both, or neither. That distance made it easier to follow the evidence instead of defending a category.

The headline takeaways

A few findings stood out immediately.

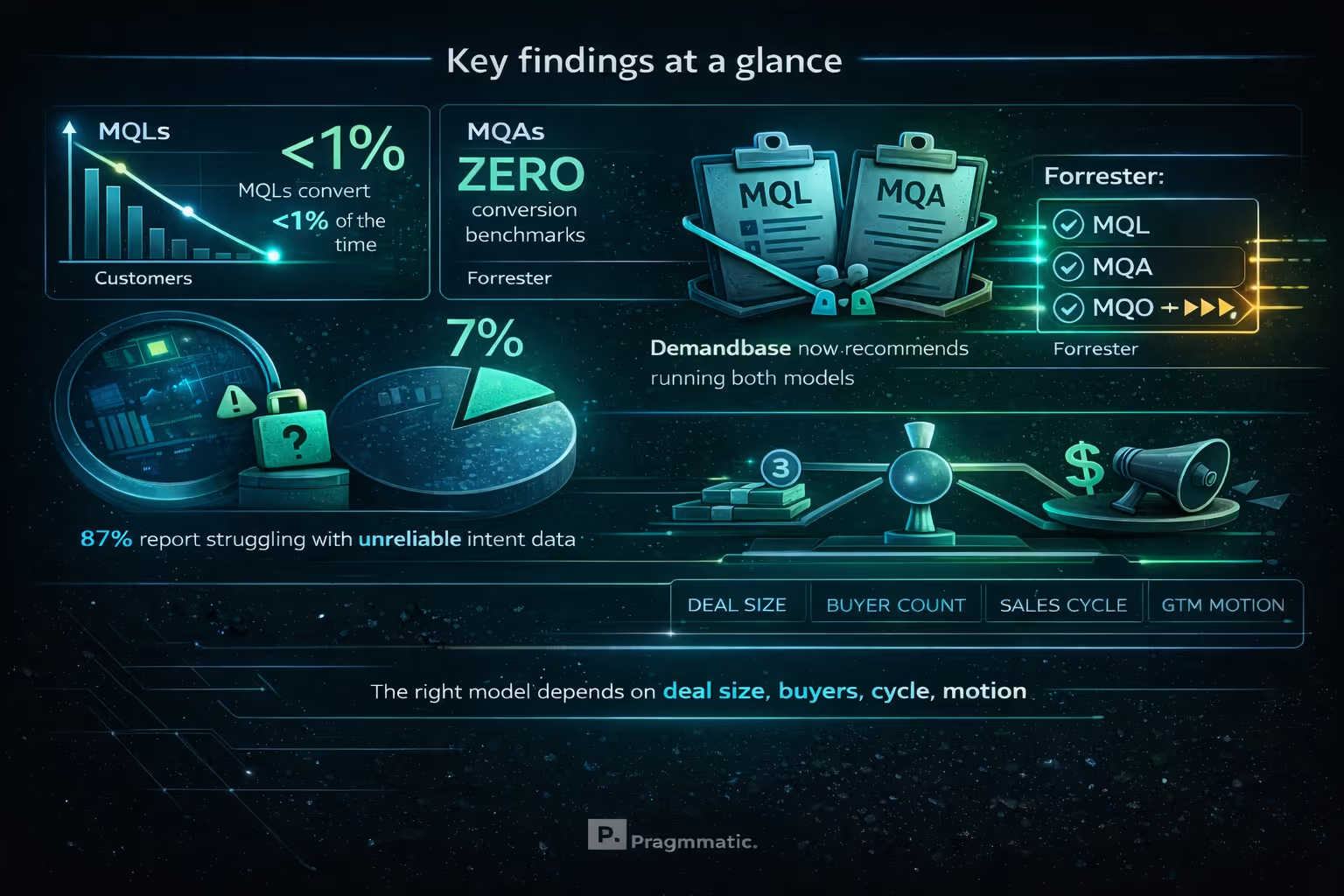

MQLs have ugly conversion rates to closed-won business, often under 1%, but there is no publicly available benchmark data showing that MQAs outperform them.

A huge portion of the MQA story depends on intent data, yet most B2B teams report that intent signals are inconsistent or misleading.

Despite the amount of industry rhetoric around MQAs, very few demand gen teams actually use them as their primary performance metric.

Even Demandbase, one of the vendors most associated with the rise of MQAs, now advises teams to run MQL and MQA motions side by side rather than treating one as a replacement for the other.

Forrester’s position is even more interesting: it argues that neither MQL nor MQA is really sufficient, and points instead toward a buying-group or opportunity-based framework.

The biggest variable is not which acronym sounds more modern. It is whether your measurement model matches your selling motion.

And one more thing became hard to ignore: the “MQL is dead” narrative has been heavily amplified by companies that happen to make money selling the alternative.

Part 1: The critique of MQL is real — and it largely comes from insiders

The strongest attack on the MQL model does not come from random LinkedIn hot takes. It comes from people who helped create the system in the first place.

Some of the architects of modern lead management have openly acknowledged that the machine they helped build became detached from revenue reality. The result is a system where marketing automation vendors, lead scoring tools, syndication providers, and agencies all benefit from producing more MQLs, even when that activity correlates only weakly with actual pipeline.

That criticism usually falls into three buckets.

First, there is a structural problem. B2B purchases are no longer driven by a single hand-raiser. In enterprise, buying decisions often involve large committees, multiple departments, and a long timeline. One person downloading a whitepaper rarely tells you much about whether an organization is actually moving toward a purchase.

Second, there is an operational problem. Sales teams have complained for years that too many MQLs are low intent, poorly timed, or irrelevant. Huge volumes of activity can create the illusion of momentum while producing very little real pipeline.

Third, there is a financial problem. Teams can hit their lead goals and still miss revenue. That gap is not theoretical. It shows up over and over when lead volume becomes the target rather than a means to business outcomes.

The benchmark data behind these critiques is brutal. MQL-to-customer conversion is extremely low. Sales and marketing often do not even agree on what qualifies as a lead. Content-driven leads make up the majority of MQL volume, yet those leads often convert terribly. Meanwhile, many buyers have already narrowed their vendor preference long before they ever talk to sales.

So yes, the case against poorly run MQL programs is very strong.

Part 2: MQA inherits many of the same weaknesses — and lacks proof

This is where the industry conversation starts to get slippery.

The central criticism of MQLs is that individual engagement does not equal purchase intent. Fair enough. But that same logic applies to MQAs. Just because multiple people at the same company are consuming your content or showing signs of engagement does not automatically mean a deal is taking shape.

That is the uncomfortable truth.

Forrester’s critique is especially sharp here. The problem with MQLs is that they can be too narrow, too person-specific, and disconnected from the broader buying reality. The problem with MQAs is that they can swing too far in the other direction. The account becomes the unit of analysis, but an account is not a decision-maker. It is just a legal entity. Companies do not buy things. People inside companies buy things for specific reasons, with specific priorities, tied to specific initiatives.

That distinction matters.

There are a few recurring failure modes with MQA.

One is compression. A single account-level score can flatten multiple separate buying initiatives into one signal. If several people at one company are researching different products or different use cases, the score may rise without telling anyone what is actually happening.

Another is ambiguity. Sales teams see “engaged account” flags but do not know what to do next. Who is involved? What topic are they interested in? Is this one opportunity or several? Is it early-stage curiosity or active evaluation?

The third is loss of context. A number without narrative is hard to operationalize. High engagement can mean many things, and without specificity, the actionability drops fast.
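The compression problem is easier to see with a small example. What follows is a minimal sketch, not a description of how any ABM platform actually scores accounts. The contacts, topics, and point values are invented, and the two helper functions (`account_score`, `scores_by_topic`) are hypothetical names used only for illustration.

```python
from collections import defaultdict

# Hypothetical engagement events for ONE account: (contact, topic, points).
# All names, topics, and point values are invented for illustration.
events = [
    ("ana", "data-warehouse", 10),
    ("ben", "data-warehouse", 15),
    ("cho", "security-audit", 20),
    ("dev", "hr-onboarding", 5),
]


def account_score(events):
    """Collapse every event into a single account-level number."""
    return sum(points for _, _, points in events)


def scores_by_topic(events):
    """Keep the same events, but grouped by topic with the people involved."""
    grouped = defaultdict(lambda: {"points": 0, "contacts": set()})
    for contact, topic, points in events:
        grouped[topic]["points"] += points
        grouped[topic]["contacts"].add(contact)
    return dict(grouped)


total = account_score(events)       # one number, no context
by_topic = scores_by_topic(events)  # three separate threads of interest
```

The single total treats four touches as one signal. The grouped view shows three unrelated topics, only one of which involves more than one person. That is the information a sales rep needs in order to answer "who is involved?" and "is this one opportunity or several?"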

The most revealing gap in all of this is the evidence gap. MQLs, for all their flaws, at least have established benchmark ranges that people can evaluate. MQAs, despite the excitement surrounding them, do not have the same publicly available, independently validated conversion benchmarks tied to closed-won revenue.

That matters more than most people admit.

A model can sound sophisticated, but until it proves it drives better business outcomes, sophistication is not the same thing as superiority.

Part 3: The shaky foundation beneath intent-driven models

A lot of the MQA promise rests on intent data, especially third-party intent. That is a problem, because intent data itself is far less reliable than the market narrative suggests.

Many teams report that intent signals are noisy, late, or misleading. In some cases, what looks like strong intent turns out to be little more than a reflection of the company’s own campaigns and outbound activity feeding back into the system. The signal starts to validate itself. You increase outreach because intent appears high, that outreach creates more engagement, and that engagement then makes the original score look accurate.

That loop can become an echo chamber.

This does not mean intent data is worthless. It means teams should treat it carefully, especially if they are building major process changes or six-figure software investments around it. If the underlying signal is inconsistent, everything sitting on top of it gets shakier too.

Put differently: an account scoring layer is only as good as the quality of the signal beneath it. For a lot of teams, that signal is far from dependable.

Part 4: Why MQA is growing anyway

The rise of MQA is not just vendor hype. It is partly a response to a real market shift.

Buying has changed. More stakeholders are involved. Deals take longer. Large portions of buying journeys now happen anonymously. The classic “one person fills out a form, sales follows up, deal progresses” model simply does not describe many enterprise purchases anymore.

That is the real opening MQA addresses. It tries to capture broader account engagement in situations where individual lead-centric measurement breaks down.

But there is another force at work too: vendors have been highly effective at turning that real market gap into a category story.

When you sell ABM software, it is convenient if the market believes legacy lead models are fundamentally broken and must be replaced by account-level measurement. That does not make the argument false. It does mean it should be evaluated with a little more skepticism.

Plenty of practitioners have started expressing fatigue with the promise-versus-reality gap. The story sounds elegant at conferences and on LinkedIn. The lived experience inside teams often looks messier: expensive platforms, uneven adoption, questionable data quality, and sales teams that do not consistently use what marketing bought.

One of the more telling signals is that even the vendors who helped drive MQA adoption have softened the replacement narrative. The move now is less “throw out MQLs” and more “run parallel models.”

That shift says a lot.

Part 5: What people say publicly versus how teams actually operate

This may be the most revealing part of the whole debate.

Publicly, the narrative sounds decisive. “MQL is dead.” “The future is MQA.” “Modern demand gen is account-based.” The language is confident, almost moralistic.

Operationally, many teams behave very differently.

When it is time to report performance to the CEO, CFO, or board, companies still fall back on metrics that look a lot like the old ones: leads, conversions, cost per opportunity, sourced pipeline, closed-won revenue. Those measures may be imperfect, but they are concrete, legible, and tied to how the business understands growth.

That gap between public rhetoric and internal reporting tells you something important. A lot of teams may admire the account-based narrative while still running their business on lead-based economics.

That does not mean those teams are backward. It may simply mean they are being honest about what their systems and buying motion can actually support.

Part 6: The defense of MQL is stronger than the discourse suggests

The pro-MQL case rarely gets treated fairly anymore, but it deserves more credit than it gets.

A big part of the problem is that many organizations built weak MQL programs and then concluded the metric itself was the issue. In reality, a lot of poor MQL performance comes from poor sourcing, poor definitions, and poor instrumentation.

Not all MQLs are created equal.

An inbound demo request, a pricing-page conversion, or a strong hand-raise from a well-matched account is not the same thing as a low-intent content lead gathered through syndication or generic nurture. Teams that lump all of that into one bucket and then declare “MQLs don’t work” are usually masking a quality problem, not diagnosing a measurement problem.
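A bit of arithmetic shows how lumping sources together can hide a quality problem. This is a sketch under stated assumptions: the source names, volumes, and conversion rates below are made up for illustration and are not benchmarks from this research.

```python
# Hypothetical MQL sources: name -> (MQL volume, MQL-to-closed-won rate).
# Every number here is invented to illustrate the mix effect.
sources = {
    "demo_request": (100, 0.05),
    "pricing_page": (100, 0.03),
    "content_syndication": (800, 0.0025),
}


def expected_wins(volume, rate):
    """Expected closed-won deals from one source."""
    return volume * rate


def blended_rate(sources):
    """Conversion rate when every source is reported as one MQL bucket."""
    total_volume = sum(volume for volume, _ in sources.values())
    total_wins = sum(expected_wins(v, r) for v, r in sources.values())
    return total_wins / total_volume


wins = {name: expected_wins(v, r) for name, (v, r) in sources.items()}
rate = blended_rate(sources)
```

With these made-up inputs, the blended rate works out to 1%, which looks like a verdict on "MQLs" as a whole. Broken out by source, the syndicated content leads account for 80% of the volume but only 2 of the 10 expected wins, while demo requests and pricing-page conversions carry the rest. The blended number describes the sourcing mix more than it describes the metric.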

There is also an argument that many of the best modern ABM practices are really just better-run versions of lead qualification. Start with the right account list. Focus on the right buying roles. Track meaningful engagement. Route intelligently. That does not have to be framed as some total break from MQL thinking. In many cases, it is simply a more disciplined version of it.

That is why the “MQL is dead” line feels overstated. Plenty of companies still rely on MQLs because, for their motion, MQLs are still useful. Not glamorous. Not trendy. Useful.

Part 7: The model should fit the business

The biggest problem with the MQL-vs-MQA debate is that it implies there is one correct answer for everyone.

There is not.

The better question is which model fits your business. Four factors matter most.

The first is deal size. Lower-ACV sales, especially those with a single or near-single decision-maker, tend to map more naturally to lead-centric measurement. Higher-ACV enterprise sales usually require a broader account or buying-group view.

The second is stakeholder complexity. If one or two people make the decision, tracking the person makes sense. If the purchase involves a web of champions, users, economic buyers, and influencers, you need something broader than individual lead activity.

The third is sales cycle length. In fast-moving cycles, lead signals can remain actionable. In long cycles, those same signals can decay long before the buying process resolves.

The fourth is GTM motion. Product-led growth has pushed some companies not toward MQAs, but toward PQLs. High-volume inbound motions often remain compatible with MQLs. High-touch ABM motions usually need account or buying-group overlays.

In other words, the right model is contingent. It depends on how revenue is actually generated in your business, not on which acronym is winning the content war.

For many organizations, especially those selling across segments, the best answer may be parallel paths rather than ideological purity.
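The four factors above can be turned into a rough checklist. The sketch below is one hypothetical way to do that. The thresholds (a 50,000 ACV cutoff, three stakeholders, a 90-day cycle) and the vote-counting rule are assumptions chosen for illustration, not findings from the research, and any real team would need to set its own.

```python
from dataclasses import dataclass


@dataclass
class Motion:
    acv_usd: float     # typical annual contract value
    stakeholders: int  # typical number of people in the decision
    cycle_days: int    # typical sales cycle length in days
    gtm: str           # "plg", "inbound", or "abm"


def suggest_model(m: Motion) -> str:
    """Return a starting-point suggestion, not a prescription."""
    # Product-led motions are treated separately, per the GTM factor.
    if m.gtm == "plg":
        return "PQL"
    # Each True counts as one vote for an account-level view.
    # All thresholds are illustrative assumptions.
    account_votes = sum([
        m.acv_usd >= 50_000,
        m.stakeholders >= 3,
        m.cycle_days >= 90,
        m.gtm == "abm",
    ])
    if account_votes >= 3:
        return "account or buying-group overlay"
    if account_votes <= 1:
        return "MQL"
    return "parallel MQL and account view"


smb = suggest_model(Motion(8_000, 1, 21, "inbound"))
enterprise = suggest_model(Motion(250_000, 8, 240, "abm"))
mixed = suggest_model(Motion(60_000, 4, 45, "inbound"))
```

The middle branch is deliberate. When the factors disagree, the sketch returns a parallel setup rather than forcing a single answer, which matches the argument that the right model is contingent.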

Part 8: The more rigorous alternative is probably MQO — but most teams are not there yet

The most thoughtful analyst view in this whole conversation may be that both MQL and MQA are incomplete.

The more precise lens is not a lead or even an account. It is a buying group pursuing a specific opportunity.

That framework is often described as MQO: Marketing Qualified Opportunity.

The logic is stronger than either of the older models. Deals are not won by “accounts” in the abstract, and they are rarely explained by a single lead score. They are won when a defined group of stakeholders inside a company mobilizes around a specific initiative with enough urgency, consensus, and commercial potential to create a real sales opportunity.

That is a better unit of analysis.

The issue is practicality. Most teams are not operationally set up to track buying groups and opportunity-level progression with that kind of rigor. The systems, processes, and organizational alignment required are not trivial.

So while MQO may be the cleanest conceptual model, the practical reality is that many teams will live in hybrid territory for a long time.

Conclusion: the metric is not the strategy

The real lesson here is that the MQL-versus-MQA argument is not actually about acronyms. It is about fit.

MQLs break when they are forced onto complex enterprise buying motions they were never designed to represent. MQAs break when they are treated as inherently smarter, despite limited proof and unclear actionability. Both fail when teams confuse measurement with strategy.

A few conclusions feel hard to escape.

First, the anti-MQL narrative has absolutely been amplified by vendors who benefit from selling the replacement. That does not automatically invalidate the critique, but it should change how heavily you weight the loudest voices.

Second, the lack of publicly validated MQA conversion benchmarks is a major hole in the argument. Until those numbers exist, claims of superiority remain more theoretical than proven.

Third, the most rigorous future-facing model is probably buying-group or opportunity-based measurement, but most teams will not operationalize that cleanly anytime soon.

So the question is not whether MQLs or MQAs are “better.”

The question is whether your measurement approach reflects how your buyers actually buy — and whether your team has the operational maturity to run that model well.

That is the real work.